This weekend, two men died in an unspeakable Tesla wreck. They drove a 2019 Model S off the road and hit a tree, then the electric car burst into flames and burned for hours, according to reporting by KPRC 2 in Houston. The most troubling detail from the incident is that, according to CNN, the police “investigators are certain no one was in the driver’s seat at the time of the crash.” One person was reportedly in the passenger seat, one was in the back row.

After reading this harrowing story on Monday, I clicked over to Instagram and searched the hashtag #Teslalife. The first video that popped up in the results, in the upper left-hand corner in the largest tile, was a repost from the TikTok account @tesla.tok, which has more than 247,000 followers. The short video loop shows a person driving a Tesla with Autopilot engaged and their hands off the wheel, freeing them to eat a Chipotle burrito bowl, while a voiceover says, “This is why I got a Tesla … So I can use self driving to safely stuff my face [rolling on floor laughing emoji].” The post has over 3.6 million views at the time of writing.

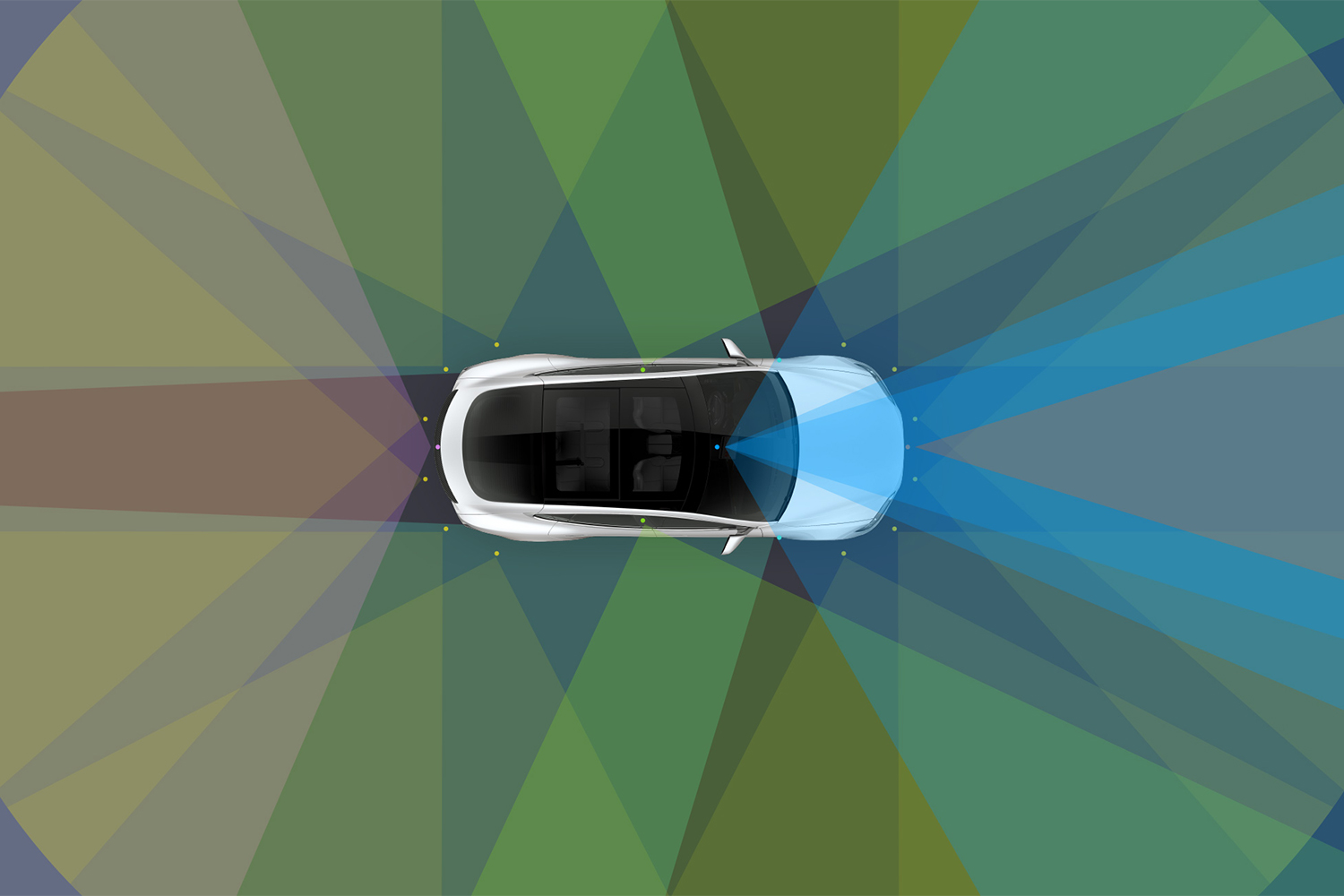

I’ll admit it’s a coincidence, reading about another crash reportedly involving Tesla’s misleading driver assistance system and then immediately coming across one of the dozens of viral videos promoting the idea that the car drives itself, which Teslas do not do. But just because it’s a coincidence doesn’t mean the latter isn’t a problem. Tesla has built its brand around memes, shareable moments and internet culture, but now those memes are creating potentially deadly real-world consequences for owners and other drivers alike.

Say you’re the CEO of a company, two people die using a product you make and the news makes national, even international, headlines. You’d expect, at the very least, condolences in the form of a press release. Tesla dissolved its PR department last year, so a statement from a spokesperson was out of the question. But CEO Elon Musk did take to Twitter, though not to offer any sympathy.

Instead, he decided the best thing to do after this crash would be to double down on the tech behind Autopilot and a more advanced driver assistance suite called Full Self-Driving (which does not actually offer full self-driving), and also to criticize the reporting of The Wall Street Journal.

After writing that the “research” of a random Twitter user was “better” than the newspaper’s, Musk wrote on Monday, “Data logs recovered so far show Autopilot was not enabled & this car did not purchase FSD.” He also signaled his support for a user who defended Musk and Tesla, a user who has “Testing Autopilot FSD Beta” written in their Twitter bio. Judging by these tweets, the 49-year-old billionaire is more concerned with defending his company and shifting the blame than offering even one iota of compassion. It’s not surprising, especially considering his Twitter record, but it’s still appalling.

The argument taking root in this case is this: Musk says Autopilot was not engaged, and therefore neither the driver assistance system nor the company is to blame. By that logic, it’s the driver’s fault. Musk did not provide any evidence for his claims, but we will find out more details soon enough, as search warrants will be served to Tesla on Tuesday. But while whether Autopilot was engaged is obviously a good question for this specific investigation, it’s the wrong thing to focus on if we want to stop things like this from happening.

According to The New York Times, the wives of the men who were killed in the wreck “watched them leave in the Tesla after they said they wanted to go for a drive and were talking about the vehicle’s Autopilot feature.” This detail is the crux of the real issue. It doesn’t matter if the car had the beta version of FSD. It doesn’t matter if Autopilot was engaged or not. If people mistakenly believe Tesla vehicles drive themselves, then we will end up with deaths outside the normal scope of traffic fatalities — that is, completely avoidable deaths. And people do believe that lie, thanks to viral videos and a hands-off approach from Tesla.

Apart from the aforementioned video, there’s one from September 2020 in which a North Carolina man filmed himself sitting in the passenger seat, the driver’s seat empty, while his car drove down the road. Then there’s the TikTok post from November of last year in which a mom helped her son film himself sleeping in the back of a Tesla as it drove down the highway. The list goes on. There are also a number of videos a click away if you’re looking for tips on how to override Tesla’s Autopilot safety measures, which is likely how these videos keep cropping up. Tesla fans are a passionate bunch, so they’re going to share innocent things like photos of their cars, but they’re also going to share memes and hacks, no matter how dangerous.

So where are Musk and Tesla in all this? Yes, the company has a note on its website that reads, “Current Autopilot features require active driver supervision and do not make the vehicle autonomous.” But that’s not what Tesla fans respond to. They respond to Musk himself, who has recently been promoting the upgraded FSD features on Twitter. It’s an echo of his COVID-19 tantrums, when he lashed out about restrictions that shut down Tesla factories, prioritizing production over safety. Here again, Musk is forging ahead with the rollout of his tech and casually brushing off serious safety concerns.

What we need is for Musk to forcefully tell his fans and customers to keep their hands on the wheel, and to stop making these reckless videos. What we need is for the company to change the names of its Full Self-Driving and Autopilot systems until the regulated tech warrants those descriptors, as the public obviously believes they mean something they don’t. What we need is a more thorough investigation into the crashes and deaths that have involved Tesla’s driver assistance features; investigations are already underway into 23 recent accidents, and that was before this latest incident.

But at the moment, it looks like we’ll only be getting the last of these.

This article appeared in an InsideHook newsletter.