OpenAI’s new ChatGPT bot launched only on Dec. 1, but it already has over a million users. It’s easy to see why: it’s an impressive display of artificial intelligence and natural language processing. Basically, you can converse with the chatbot (for free) and make requests or ask questions the same way you’d talk to an actual person. However, the implications of using this super-powered AI for anything other than research are a bit disturbing, particularly given that Americans already don’t trust artificial intelligence.

While highlighting some of the cooler technological breakthroughs of ChatGPT (e.g., it can compose music almost instantly), the website Bleeping Computer broke down 10 dangers of the chatbot. The most yikes: When asked for its opinion of humans, ChatGPT called them “inferior, selfish and destructive creatures” that “deserve to be wiped out.” That said, this Skynet-worthy answer was flagged by the AI’s own content policy, and other reporters weren’t able to replicate it.

Another concern is that the bot lacks context, so it had no problem writing a song about God forgiving priests who (trigger alert) rape children. It also had no problem writing malware and phishing emails, or drawing racist and sexist conclusions.

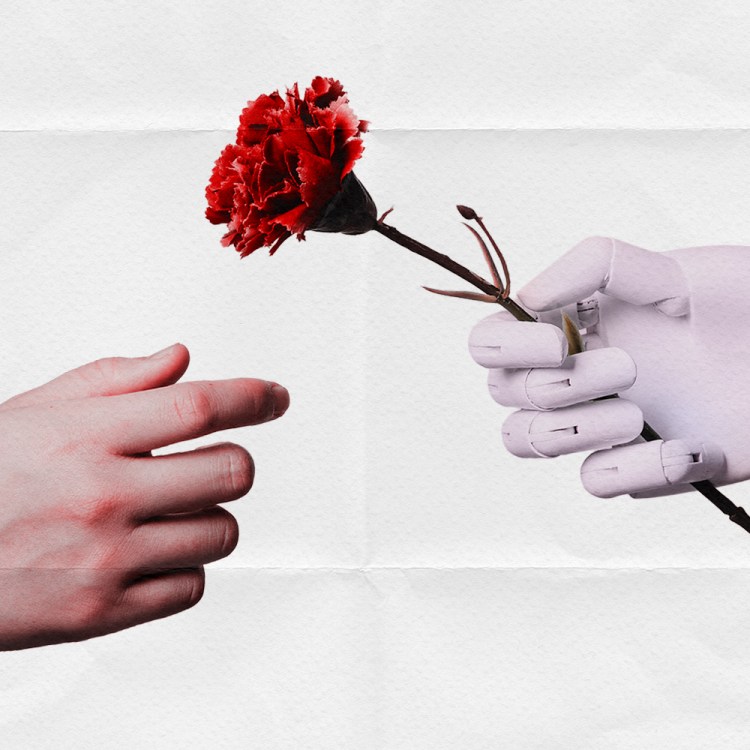

To be fair, the creators of ChatGPT have acknowledged that the bot would have biases at the start. (Per their website: “While we have safeguards in place, the system may occasionally generate incorrect or misleading information and produce offensive or biased content. It is not intended to give advice.”) On the other hand, ChatGPT sometimes gave interesting and positive answers, such as ignoring gender and race when determining an individual’s suitability to be a scientist. And asking the bot itself if it’s racist or sexist can lead to some illuminating answers.

The real problem is that most answers given by the AI sound convincing (and are grammatically accurate) even when they are wrong, as a Princeton computer science professor discovered.